How to Access and Use the Veo 3 API: Developer Guide (2026)

Complete developer guide to the Veo 3 API in 2026: Vertex AI, Gemini API, authentication, Python and Node.js code examples, rate limits, and pricing.

Emma Chen · 6 min read · Apr 2, 2026

Google Veo 3 generates photorealistic video with native audio in a single generation call, a major step forward for AI video generation. For developers, the Veo 3 API makes it possible to integrate this technology directly into applications, platforms, and automated workflows. This guide walks you through everything you need to get started with the Veo 3 API in 2026.

What Is the Veo 3 API?

The Veo 3 API is Google's programmatic interface to the Veo 3 video generation model, the same model that is available through Google Flow, Vertex AI, and the veo3ai.io platform. Through the API, developers can:

- Submit text prompts and receive generated video clips
- Submit image inputs together with text to generate videos from visual references
- Control generation parameters such as duration, aspect ratio, and quality settings
- Integrate native audio generation, Veo 3's signature feature
- Automate batch workflows for high-volume production pipelines

Veo 3 is currently Google's most capable video generation model. It stands out for generating video and synchronized audio together: ambient sound, dialogue, music, and sound effects are produced in a single inference pass. This makes the API particularly useful for applications that need production-ready media without extensive post-processing.

Veo 3 vs. Veo 2: Key Improvements

| Feature | Veo 2 | Veo 3 |
|---|---|---|
| Native audio | No | Yes |
| Max. resolution | 1080p | 1080p+ |
| Generation quality | High | Industry-leading |
| Physical realism | Good | Exceptional |
| Character consistency | Moderate | Strong |
| Lip sync | No | Yes (native) |

How to Access the Veo 3 API

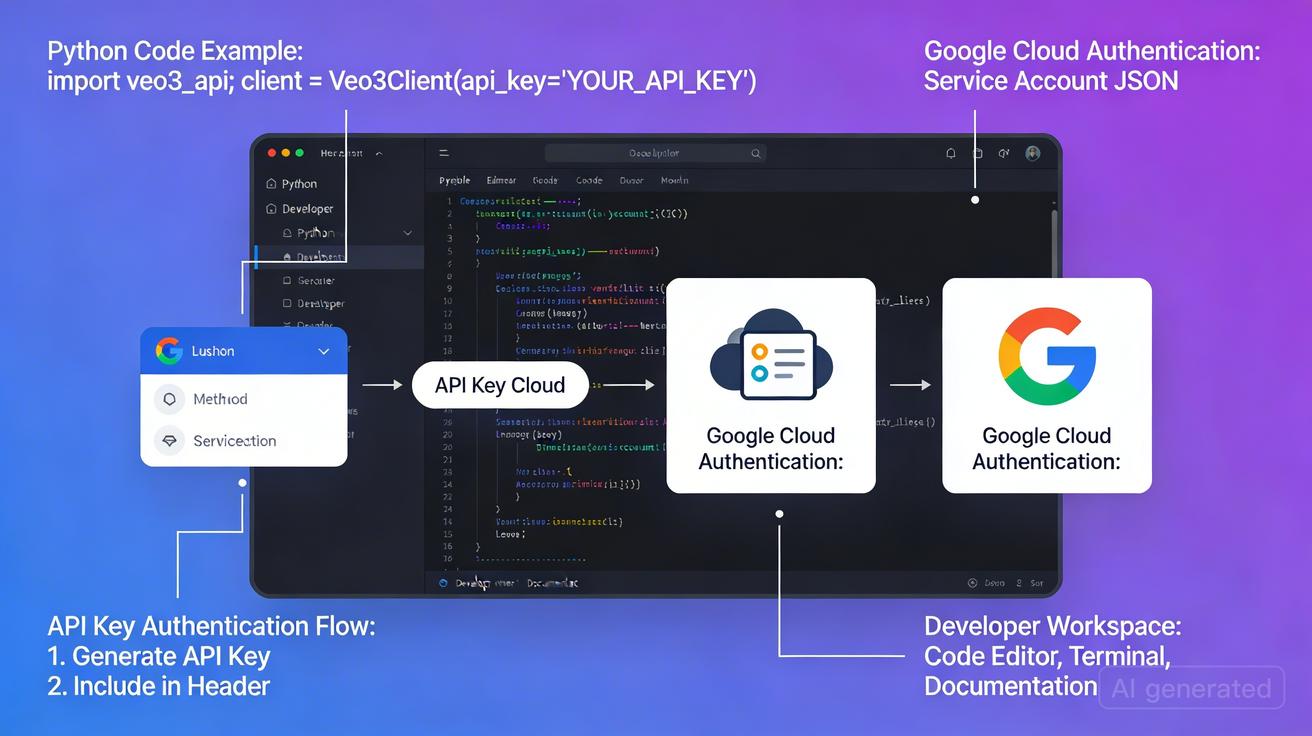

There are currently three main ways to access the Veo 3 API, each suited to different use cases and scales.

Path 1: Google Vertex AI (Primary API Access)

Vertex AI is Google's enterprise ML platform on Google Cloud and the primary route to production-grade Veo 3 API access.

Requirements:

- A Google Cloud account (free to create at cloud.google.com)
- A Google Cloud project with billing enabled
- The Vertex AI API enabled in your project
- An approved access request (Veo 3 has a waitlist)

Step-by-step setup:

Step 1: Create and configure a Google Cloud project

```bash
# Initialize the Google Cloud CLI (install it first if you have not already)
gcloud init
gcloud config set project YOUR_PROJECT_ID

# Enable the required APIs
gcloud services enable aiplatform.googleapis.com
gcloud services enable storage.googleapis.com
```

Step 2: Request Veo 3 access

Go to the generative media models page in Vertex AI and submit an access request form. Google reviews requests for compliance with its usage policies. Approval typically takes 1–5 business days.

Step 3: Create a service account for authentication

```bash
# Create the service account
gcloud iam service-accounts create veo3-api-client \
  --display-name="Veo 3 API Client"

# Grant the required role
gcloud projects add-iam-policy-binding YOUR_PROJECT_ID \
  --member="serviceAccount:veo3-api-client@YOUR_PROJECT_ID.iam.gserviceaccount.com" \
  --role="roles/aiplatform.user"

# Create a key and download the credentials file
gcloud iam service-accounts keys create veo3-credentials.json \
  --iam-account=veo3-api-client@YOUR_PROJECT_ID.iam.gserviceaccount.com
```
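Before wiring the downloaded key into an application, it can help to confirm that the file really is a service-account key for the expected account. The sketch below is one way to do that with the standard library only. `validate_key_data` and `check_service_account_key` are hypothetical helper names, and the field list reflects the usual layout of a service-account key file, not anything stated in this guide.

```python
import json
from pathlib import Path
from typing import Any, Dict

# Fields a service-account key file normally contains (assumption, see lead-in).
REQUIRED_KEY_FIELDS = ("type", "project_id", "private_key", "client_email")


def validate_key_data(data: Dict[str, Any]) -> Dict[str, str]:
    """Check parsed key data and return the non-secret identifying fields."""
    missing = [field for field in REQUIRED_KEY_FIELDS if not data.get(field)]
    if missing:
        raise ValueError(f"Key file is missing fields: {', '.join(missing)}")
    if data["type"] != "service_account":
        raise ValueError(f"Unexpected credential type: {data['type']!r}")
    # Never return or log the private key itself.
    return {"project_id": data["project_id"], "client_email": data["client_email"]}


def check_service_account_key(path: str) -> Dict[str, str]:
    """Load a key file from disk and validate it."""
    return validate_key_data(json.loads(Path(path).read_text(encoding="utf-8")))
```

Calling `check_service_account_key("veo3-credentials.json")` at startup surfaces a wrong or truncated file early, before the first API request fails with a less obvious authentication error.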

Path 2: Google AI Studio / Gemini API

For prototyping and smaller applications, Google AI Studio provides access to Veo 3 through the Gemini API.

Requirements:

- A Google account
- An API key from aistudio.google.com
- Veo 3 access (depending on availability in your region)

This path is easier to set up but may come with lower rate limits.

Path 3: Third-Party API Platforms

Several platforms offer simplified access to Veo 3 through unified API endpoints:

- veo3ai.io: consumer interface with API access for developers
- Replicate: hosts Veo 3 with simple REST API access
- fal.ai: low-latency inference API with Veo 3 support

Authentication

Vertex AI (Service Account)

The recommended authentication method for production applications is service-account credentials used through the Google Cloud client libraries.

```bash
export GOOGLE_APPLICATION_CREDENTIALS="/path/to/veo3-credentials.json"
export GOOGLE_CLOUD_PROJECT="your-project-id"
export GOOGLE_CLOUD_LOCATION="us-central1"
```

Gemini API (API Key)

For the Gemini API path:

```bash
export GEMINI_API_KEY="your-api-key-here"
```

Security note: Never commit API keys or credentials to version control. Use environment variables or a secrets manager.
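One way to keep secrets out of the codebase is to read the variables above once at startup and fail fast when neither access path is configured. The following is a minimal sketch under that assumption. `VeoSettings` and `load_settings` are hypothetical names, and the `us-central1` default simply mirrors the export shown above.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class VeoSettings:
    project_id: Optional[str]
    location: str
    credentials_path: Optional[str]
    gemini_api_key: Optional[str]

    @property
    def uses_vertex(self) -> bool:
        """True when the Vertex AI (service account) path is fully configured."""
        return bool(self.project_id and self.credentials_path)


def load_settings(env: Mapping[str, str] = os.environ) -> VeoSettings:
    """Read configuration from environment variables and validate it."""
    settings = VeoSettings(
        project_id=env.get("GOOGLE_CLOUD_PROJECT"),
        location=env.get("GOOGLE_CLOUD_LOCATION", "us-central1"),
        credentials_path=env.get("GOOGLE_APPLICATION_CREDENTIALS"),
        gemini_api_key=env.get("GEMINI_API_KEY"),
    )
    if not settings.uses_vertex and not settings.gemini_api_key:
        raise RuntimeError(
            "Set GOOGLE_CLOUD_PROJECT and GOOGLE_APPLICATION_CREDENTIALS, "
            "or set GEMINI_API_KEY."
        )
    return settings
```

Passing the environment in as a mapping keeps the function easy to test and makes it straightforward to swap in values fetched from a secrets manager later.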

Code Examples

Python: Generate a Video with Vertex AI

```python
import os
import time
from typing import Optional

from google import genai
from google.genai import types


def generate_veo3_video(
    prompt: str,
    duration_seconds: int = 8,
    aspect_ratio: str = "16:9",
    resolution: str = "1080p",
    project_id: Optional[str] = None,
    location: str = "us-central1",
    output_gcs_uri: Optional[str] = None,
    poll_interval_seconds: int = 15,
) -> str:
    """Generate a video with the Veo 3 API on Vertex AI and return its URI.

    Sketch based on the google-genai SDK (pip install google-genai) in
    Vertex AI mode. Check the model ID and config fields against the current
    Vertex AI reference. The returned URI is populated when output_gcs_uri
    points at a Cloud Storage location, e.g. "gs://your-bucket/veo/".
    """
    project_id = project_id or os.environ["GOOGLE_CLOUD_PROJECT"]

    # Credentials are picked up from GOOGLE_APPLICATION_CREDENTIALS.
    client = genai.Client(vertexai=True, project=project_id, location=location)

    # Video generation runs as a long-running operation, not a synchronous call.
    operation = client.models.generate_videos(
        model="veo-3.0-generate-preview",
        prompt=prompt,
        config=types.GenerateVideosConfig(
            duration_seconds=duration_seconds,
            aspect_ratio=aspect_ratio,
            resolution=resolution,
            generate_audio=True,  # Veo 3's native audio feature
            output_gcs_uri=output_gcs_uri,
        ),
    )
    print(f"Generation started. Operation: {operation.name}")

    # Poll until the operation has finished.
    while not operation.done:
        time.sleep(poll_interval_seconds)
        operation = client.operations.get(operation)

    if operation.error:
        raise RuntimeError(f"Video generation failed: {operation.error}")

    video_uri = operation.response.generated_videos[0].video.uri
    print(f"Video generated: {video_uri}")
    return video_uri
```
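Both code examples in this guide wait on a long-running generation job, so some form of polling with a timeout is needed regardless of which client is used. As a rough illustration, the loop can be written independently of any SDK. In this sketch `poll_until_done` and the `fetch_status` callable are hypothetical, as is the shape of the status dictionary (`done`, `error`); adapt them to whatever the chosen client actually returns.

```python
import time
from typing import Any, Callable, Dict


def poll_until_done(
    fetch_status: Callable[[], Dict[str, Any]],
    interval_seconds: float = 10.0,
    timeout_seconds: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Dict[str, Any]:
    """Call fetch_status() until it reports done, fails, or the timeout expires."""
    deadline = clock() + timeout_seconds
    while True:
        status = fetch_status()
        if status.get("done"):
            if status.get("error"):
                raise RuntimeError(f"Generation failed: {status['error']}")
            return status
        if clock() >= deadline:
            raise TimeoutError(f"Operation not finished after {timeout_seconds}s")
        sleep(interval_seconds)
```

The `sleep` and `clock` parameters exist only so the loop can be exercised in tests without waiting; production code can leave them at their defaults.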

Node.js: Generate a Video with the Gemini API

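```javascript
import { GoogleGenAI } from "@google/genai";

// Sketch based on the @google/genai SDK. Check the method names and config
// fields against the current Gemini API reference before relying on them.
const ai = new GoogleGenAI({ apiKey: process.env.GEMINI_API_KEY });

async function generateVeo3Video(prompt, options = {}) {
  const { durationSeconds = 8, aspectRatio = "16:9", pollIntervalMs = 10000 } = options;

  // Start the long-running generation job. Veo 3 generates audio natively,
  // so no explicit audio flag is sent here (assumption for the Gemini API path).
  let operation = await ai.models.generateVideos({
    model: "veo-3.0-generate-preview",
    prompt,
    config: { durationSeconds, aspectRatio },
  });

  // Poll until the operation has finished.
  while (!operation.done) {
    await new Promise((resolve) => setTimeout(resolve, pollIntervalMs));
    operation = await ai.operations.getVideosOperation({ operation });
  }

  const videos = operation.response?.generatedVideos ?? [];
  if (videos.length > 0) {
    const videoUri = videos[0].video.uri;
    console.log(`Video ready: ${videoUri}`);
    return videoUri;
  }

  throw new Error("No video was generated");
}
```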

API Request Parameters

| Parameter | Type | Description |
|---|---|---|
| prompt | string | Text description of the video to generate (required) |
| duration_seconds | integer | Video duration: 5, 6, 7, or 8 seconds |
| aspect_ratio | string | "16:9", "9:16", or "1:1" |
| resolution | string | "720p" or "1080p" |
| generate_audio | boolean | Enable native audio generation (default: true) |
| seed | integer | Random seed for reproducibility (optional) |
| negative_prompt | string | Elements to avoid in the video |
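Since every request is billed and counts against quota, it can be worth rejecting invalid parameters locally before they reach the API. The sketch below checks a request against the values listed in the table above. `validate_request` is a hypothetical helper, and the allowed values are copied from this guide, so they should be updated if the API's actual limits differ.

```python
from typing import List

# Allowed values as listed in the parameter table of this guide.
ALLOWED_DURATIONS = {5, 6, 7, 8}
ALLOWED_ASPECT_RATIOS = {"16:9", "9:16", "1:1"}
ALLOWED_RESOLUTIONS = {"720p", "1080p"}


def validate_request(
    prompt: str,
    duration_seconds: int = 8,
    aspect_ratio: str = "16:9",
    resolution: str = "1080p",
) -> List[str]:
    """Return a list of human-readable problems; an empty list means valid."""
    errors = []
    if not prompt or not prompt.strip():
        errors.append("prompt is required")
    if duration_seconds not in ALLOWED_DURATIONS:
        errors.append(f"duration_seconds must be one of {sorted(ALLOWED_DURATIONS)}")
    if aspect_ratio not in ALLOWED_ASPECT_RATIOS:
        errors.append(f"aspect_ratio must be one of {sorted(ALLOWED_ASPECT_RATIOS)}")
    if resolution not in ALLOWED_RESOLUTIONS:
        errors.append(f"resolution must be one of {sorted(ALLOWED_RESOLUTIONS)}")
    return errors
```

Returning all problems at once, instead of raising on the first one, makes the helper convenient for form validation or batch jobs where each rejected item should be reported with its full list of issues.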

Rate Limits and Quotas

Vertex AI Limits

| Type | Value |
|---|---|
| Requests per minute (RPM) | 5–10 (depends on project quotas) |
| Requests per day (RPD) | 50–500 (new accounts have lower limits) |
| Max. video duration | 8 seconds per request |
| Max. input image size | 10 MB |
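With a budget of only a few requests per minute, throttling on the client side avoids most 429 responses in the first place. A minimal sliding-window limiter could look like the sketch below. `SlidingWindowLimiter` is a hypothetical class, and the limit of 5 requests per 60 seconds in the usage note is simply the lower bound from the table above, not a guaranteed quota.

```python
import time
from collections import deque
from typing import Callable, Deque


class SlidingWindowLimiter:
    """Tracks request timestamps and reports how long to wait before the next one."""

    def __init__(
        self,
        max_requests: int,
        window_seconds: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._clock = clock
        self._sent: Deque[float] = deque()

    def seconds_until_allowed(self) -> float:
        """Return 0.0 if a request may be sent now, else the remaining wait."""
        now = self._clock()
        # Drop timestamps that have left the window.
        while self._sent and now - self._sent[0] >= self.window_seconds:
            self._sent.popleft()
        if len(self._sent) < self.max_requests:
            return 0.0
        return self.window_seconds - (now - self._sent[0])

    def record(self) -> None:
        """Register that a request was just sent."""
        self._sent.append(self._clock())
```

A caller would create `SlidingWindowLimiter(max_requests=5)`, sleep for `seconds_until_allowed()` before each generation request, and call `record()` right after sending it.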

Error Handling for Rate Limiting

```python
import time
from functools import wraps


def retry_with_backoff(max_retries=3, initial_delay=10):
    """Decorator that retries rate-limited calls with exponential backoff."""

    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            delay = initial_delay
            for attempt in range(max_retries):
                try:
                    return func(*args, **kwargs)
                except Exception as e:
                    rate_limited = "429" in str(e) or "RESOURCE_EXHAUSTED" in str(e)
                    # Re-raise anything that is not a rate-limit error, and
                    # give up once the final attempt has failed.
                    if not rate_limited or attempt == max_retries - 1:
                        raise
                    print(f"Rate limit hit. Waiting {delay}s...")
                    time.sleep(delay)
                    delay *= 2  # exponential backoff
            # Only reached when max_retries is 0: call once without retrying.
            return func(*args, **kwargs)

        return wrapper

    return decorator
```
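To see what the decorator's defaults mean in practice, the wait schedule can be computed separately. The sketch below reproduces the doubling rule (wait after every failed attempt except the last) and adds two optional refinements that are not part of the decorator above: a cap on the delay and random jitter, which helps when many workers retry at once. `backoff_delays` and its extra parameters are hypothetical.

```python
import random
from typing import List, Optional


def backoff_delays(
    max_retries: int = 3,
    initial_delay: float = 10.0,
    factor: float = 2.0,
    max_delay: float = 120.0,
    jitter: float = 0.0,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Return the waits between attempts for an exponential backoff schedule.

    jitter is a fraction of each delay: 0.25 adds up to 25% random extra wait.
    """
    rng = rng or random.Random()
    delays = []
    delay = initial_delay
    # There is one wait after each failed attempt except the final one.
    for _ in range(max(max_retries - 1, 0)):
        capped = min(delay, max_delay)
        delays.append(capped + rng.uniform(0, jitter * capped))
        delay *= factor
    return delays
```

With the defaults, three attempts involve two waits of 10 and 20 seconds, so a request that keeps hitting the rate limit fails after roughly 30 seconds of waiting.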

Pricing

Vertex AI Pricing

| Duration | Approximate cost |
|---|---|
| 8 seconds (1080p) | $0.80–1.50 |
| 8 seconds (720p) | $0.50–1.00 |
| 5 seconds (1080p) | $0.50–0.95 |
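For budgeting, the approximate ranges above can be turned into a quick estimate for a batch of clips. The sketch below does only that arithmetic. `estimate_batch_cost` is a hypothetical helper, and the figures are the approximate per-clip ranges from this guide, not official list prices.

```python
from typing import Dict, Tuple

# Approximate per-clip cost ranges in USD, taken from the table in this guide.
PRICE_RANGES_USD: Dict[Tuple[int, str], Tuple[float, float]] = {
    (8, "1080p"): (0.80, 1.50),
    (8, "720p"): (0.50, 1.00),
    (5, "1080p"): (0.50, 0.95),
}


def estimate_batch_cost(
    clips: int, duration_seconds: int = 8, resolution: str = "1080p"
) -> Tuple[float, float]:
    """Return the (low, high) estimated cost in USD for a number of clips."""
    key = (duration_seconds, resolution)
    if key not in PRICE_RANGES_USD:
        raise KeyError(f"No price range listed for {duration_seconds}s at {resolution}")
    low, high = PRICE_RANGES_USD[key]
    return round(clips * low, 2), round(clips * high, 2)
```

For example, 100 eight-second clips at 1080p come to roughly $80 to $150 under these assumptions, which is a useful number to compare against a Google Cloud budget alert threshold.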

Cost Optimization

- Use 720p while testing, then switch to 1080p for final content
- Start with short clips (5 seconds) when testing prompts
- Cache generated videos so you never regenerate something that already works
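Caching only works if identical requests map to the same key. One possible approach is to hash the prompt together with all generation parameters, as sketched below. `cache_key` and `VideoCache` are hypothetical names, the cache here is a plain in-memory dictionary, and a real deployment would more likely store the mapping in a database or object storage.

```python
import hashlib
import json
from typing import Any, Callable, Dict


def cache_key(prompt: str, **params: Any) -> str:
    """Build a stable key from the prompt and generation parameters."""
    payload = json.dumps(
        {"prompt": prompt.strip(), "params": params},
        sort_keys=True,  # parameter order must not change the key
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class VideoCache:
    """In-memory cache mapping request keys to generated video URIs."""

    def __init__(self) -> None:
        self._uris: Dict[str, str] = {}

    def get_or_generate(
        self, prompt: str, generate: Callable[..., str], **params: Any
    ) -> str:
        """Return the cached URI, calling generate(prompt, **params) only on a miss."""
        key = cache_key(prompt, **params)
        if key not in self._uris:
            self._uris[key] = generate(prompt, **params)
        return self._uris[key]
```

Any callable that takes a prompt plus keyword parameters and returns a URI can be passed as `generate`, so the cache stays independent of the client library in use.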

Practical Use Cases

Use Case 1: Video Content Pipeline

```python
class Veo3ContentPipeline:
    """Production pipeline built on the Veo 3 API.

    Relies on generate_veo3_video() from the Python example above.
    """

    def __init__(self, project_id: str, output_bucket: str):
        self.project_id = project_id
        self.output_bucket = output_bucket

    def generate_product_video(
        self,
        product_name: str,
        product_description: str,
        target_platform: str = "instagram",
    ) -> str:
        """Create a marketing video for a product."""
        # Pick the aspect ratio that fits the target platform.
        aspect_ratios = {
            "instagram": "1:1",
            "tiktok": "9:16",
            "youtube": "16:9",
        }

        prompt = (
            f"Professional product showcase video featuring {product_name}. "
            f"{product_description} "
            "Clean white background, studio lighting, slow rotation, "
            "high-end commercial aesthetic."
        )

        return generate_veo3_video(
            prompt=prompt,
            aspect_ratio=aspect_ratios.get(target_platform, "16:9"),
            duration_seconds=8,
            project_id=self.project_id,
        )
```

Use Case 2: Personalized Video Greetings

```python
def generate_personalized_greeting(
    recipient_name: str,
    occasion: str,
    style: str = "warm",
) -> str:
    """Generate a personalized video greeting card.

    Relies on generate_veo3_video() from the Python example above.
    """
    style_descriptions = {
        "warm": "warm golden tones, soft bokeh, intimate atmosphere",
        "celebratory": "confetti, bright colors, festive energy",
        "professional": "clean modern design, subtle motion graphics",
    }

    # Unknown styles fall back to the "warm" look.
    style_text = style_descriptions.get(style, style_descriptions["warm"])
    prompt = (
        f"Personalized {occasion} greeting for {recipient_name}. "
        f"{style_text}. "
        "Abstract background with gentle particle effects."
    )

    return generate_veo3_video(prompt=prompt, duration_seconds=6)
```

Comparing the Veo 3 API with Alternatives

| Provider | API access | Native audio | Quality | Cost/clip |
|---|---|---|---|---|
| Google Veo 3 (Vertex AI) | ✅ | ✅ | Leading | $0.80–1.50 |
| Runway Gen-4 | ✅ | ❌ | High | $0.10–0.50 |
| Kling AI API | ✅ | ❌ | High | $0.05–0.30 |
| Pika API | ✅ | ❌ | Medium | $0.05–0.20 |

Veo 3 stands out for native audio generation and leading image quality, though at a higher price. For applications where quality and native audio are critical, the Veo 3 API is the clear choice.

Best Practices for Production Use

- Implement retries with backoff: always handle 429 errors with exponential backoff
- Cache results: store generated videos in persistent storage
- Monitor costs: set up budget alerts in Google Cloud
- Test with short clips: develop with 5-second clips at 720p to save money
- Use prompt templates: build a library of proven prompt templates
- Version your prompts: track which prompt versions produce the best results
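The last two practices can be combined by keeping templates in a small registry keyed by name and version. The sketch below uses `string.Template` from the standard library. `PROMPT_TEMPLATES` and `render_prompt` are hypothetical, and the template wording is adapted from the product showcase prompt earlier in this guide.

```python
from string import Template
from typing import Dict, Optional, Tuple

# Registry of prompt templates keyed by (name, version).
PROMPT_TEMPLATES: Dict[Tuple[str, int], Template] = {
    ("product_showcase", 1): Template(
        "Professional product showcase video featuring $product_name. "
        "$description Clean white background, studio lighting."
    ),
    ("product_showcase", 2): Template(
        "Professional product showcase video featuring $product_name. "
        "$description Clean white background, studio lighting, slow rotation, "
        "high-end commercial aesthetic."
    ),
}


def render_prompt(
    name: str, version: Optional[int] = None, **fields: str
) -> Tuple[str, int]:
    """Render a template and return (prompt, version); defaults to the newest version."""
    versions = [v for (n, v) in PROMPT_TEMPLATES if n == name]
    if not versions:
        raise KeyError(f"Unknown template: {name}")
    chosen = max(versions) if version is None else version
    # substitute() raises KeyError if a placeholder is left unfilled.
    prompt = PROMPT_TEMPLATES[(name, chosen)].substitute(**fields)
    return prompt, chosen
```

Storing the returned version number next to each generated video makes it possible to compare results across template versions later.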

Conclusion

The Veo 3 API opens up new possibilities for developers building next-generation video applications. Native audio generation, exceptional image quality, and Google's robust infrastructure make it a powerful tool for production workflows.

Start with Google AI Studio for prototyping, then move to Vertex AI to scale in production. Invest time in building effective prompt templates; it pays off in the quality and consistency of the results.
