
Seedance 2.0 Free vs Veo 3 Free 2026: Access, Quality, and Limits

A practical 2026 comparison of Seedance 2.0 Free and Veo 3 Free access, output quality, limits, workflows, and when to choose each free AI video path.

Emma Chen · 14 min read · May 2, 2026


Search demand for Seedance 2.0 Free is practical, not theoretical. People are not only asking whether an AI video model sounds impressive. They want to know how to get access, what they can make with limited credits, which prompts are worth testing, and how to avoid wasting a day on clips that cannot be edited or published. This guide is for creators, marketers, students, indie founders, and small teams who are testing AI video without committing to a paid plan and need a clear 2026 workflow for comparing free access.

The safest way to approach this topic is simple: treat AI video generation as a controlled production system. A good workflow defines the job, prepares references, writes prompts, tests output, reviews risk, and only then scales. That holds whether you are comparing free tools, planning a long-form storyboard, or creating app preview clips. The goal of this guide is to help you choose the safest free workflow before you spend credits or production time.

Target keywords covered in this guide include seedance 2.0 free, veo 3 free, seedance free vs veo 3, and free AI video generator comparison. The article does not invent private benchmark numbers or claim that access rules are permanent. AI video products change quickly, so the durable advantage is a workflow you can repeat and verify. For related Veo 3 production methods, see Veo 3 camera control prompts, Veo 3 image reference workflow, and Veo 3 native audio prompt guide.

Quick answer

When comparing free access, the winning approach is not to chase the longest prompt or the flashiest one-off clip. Instead, define a narrow video job, generate short controlled scenes, keep important facts outside the model when accuracy matters, and evaluate each result against a checklist. Here, the main use of a free tier is testing short clips, comparing prompt behavior, learning video generation basics, and deciding which tool deserves paid production work. The main risk is assuming that any free tier is unlimited, permanent, or suitable for every commercial workload.

A useful first test has five pieces: one audience, one goal, one visual reference or style rule, one motion instruction, and one review criterion. If the output cannot pass the review criterion, do not scale the concept. Rewrite the prompt, simplify the scene, or change the source material. This is slower than clicking generate repeatedly, but it saves credits and creates assets that survive editing.

Decision table

| Decision point | Seedance 2.0 Free | Veo 3 Free |
|---|---|---|
| Best first test | Often fits quick text-to-video or image-to-video exploration. | Useful when you specifically want to test a Veo-style prompt workflow. |
| Access risk | Check the current Seedance login page and credit rules before planning a campaign. | Check the current Veo 3 account path, region availability, and daily credit behavior. |
| Quality focus | Good for fast concept clips, product motion ideas, and social-style scene drafts. | Good for learning cinematic prompt control and testing Veo-oriented workflows. |
| Limit mindset | Treat free credits as research capacity, not a guaranteed production budget. | Treat free generations as validation capacity, not unlimited publishing inventory. |
| Best upgrade trigger | Upgrade when you have repeatable scenes, brand assets, or client deliverables. | Upgrade when you need reliable batch testing, higher control, or commercial consistency. |

Use the table as a pre-production filter. If the project does not have a clear source of truth, a clear review rule, and a clear export destination, pause before generating. Most weak AI video projects fail because the prompt tries to solve strategy, creative direction, product accuracy, and editing structure at the same time.

Practical workflow

Treat each step as a checkpoint before moving on to the next generation:

- Confirm current free access.

- Pick one test goal.

- Run one baseline prompt in both tools.

- Score quality and editability.

- Choose a paid workflow only after you get repeatable results.

Each checkpoint should produce a small artifact. That artifact can be a prompt, a screenshot folder, a shot card, a QA checklist, or a simple edit plan. The point is to make the workflow inspectable. If a teammate joins later, they should understand why each clip exists and how it supports the final video.

Step 1: define the video job before opening the generator

Start by writing a one-sentence job statement: 'This video helps [audience] understand [action] so they can [outcome].' When you test Seedance 2.0 Free, this sentence keeps the project from drifting into a general AI video showcase. The more precise the job, the easier it is to judge whether a generated clip is useful.

A good job statement includes the channel. A blog embed, product landing page hero, app store preview, paid social test, onboarding modal, and customer success email all need different pacing. If the same source scenes will be reused across channels, plan the variants early. Leave enough visual space for captions, avoid critical details at the edge of the frame, and do not depend on generated text being readable.

The job statement should also include a failure condition. For example: the clip fails if the interface changes shape, if the product claim cannot be supported, if the character identity changes across shots, if the free access message implies unlimited use, or if the scene cannot be trimmed into a clean edit. Failure conditions make review faster because the team does not debate taste when the asset is factually unusable.
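If your team keeps project notes in a script, the job statement and its failure conditions can live in one small record. The sketch below is a hypothetical structure (the `VideoJob` class, its fields, and the example values are illustrative assumptions, not features of Seedance or Veo): it renders the one-sentence job and treats a clip as unusable as soon as a reviewer marks any failure condition.

```python
from dataclasses import dataclass, field


@dataclass
class VideoJob:
    """Hypothetical record for one video job; not tied to any generator."""
    audience: str
    action: str
    outcome: str
    channel: str
    failure_conditions: list[str] = field(default_factory=list)

    def statement(self) -> str:
        # The one-sentence job statement from Step 1.
        return (f"This video helps {self.audience} understand {self.action} "
                f"so they can {self.outcome}.")

    def failed_conditions(self, observed: set[str]) -> list[str]:
        # Failure conditions that a reviewer marked as present in a clip.
        return [c for c in self.failure_conditions if c in observed]

    def is_usable(self, observed: set[str]) -> bool:
        # One hit is enough: a factually unusable clip fails regardless of taste.
        return not self.failed_conditions(observed)


job = VideoJob(
    audience="new users",
    action="how to export a report",
    outcome="share results with their team",
    channel="onboarding modal",
    failure_conditions=[
        "interface changes shape",
        "unsupported product claim",
        "character identity changes",
        "implies unlimited free use",
        "cannot be trimmed cleanly",
    ],
)

print(job.statement())
print(job.is_usable({"interface changes shape"}))  # False
```

Keeping failure conditions as explicit strings means the review becomes a yes/no check per condition rather than a debate about taste.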

Step 2: prepare source material and boundaries

AI video gets stronger when the input material is specific. Prepare screenshots, product references, approved brand colors, sample captions, scene notes, and examples of motion you like. Also prepare negative boundaries: no fake metrics, no invented UI, no random logos, no unreadable claims, no extra characters, no surprise text, and no changes to the product screen unless the clip is only conceptual.

For reference-based work, label assets with plain names. Use names such as start-screen, action-screen, success-screen, brand-style, and forbidden-examples. This sounds basic, but it helps prompt writing because every scene can refer to the correct asset. When a generation fails, you can isolate whether the issue came from the prompt, the reference, or the requested motion.

If the project involves a real product, remove private data before using screenshots. Replace customer names, emails, tokens, revenue figures, internal roadmap labels, and unreleased features. A beautiful clip is not usable if it leaks information or shows a feature that users cannot access.

Step 3: use prompt templates instead of improvising

Template 1:

A clean product demo clip of [product] solving [problem] for [user], one subject, one action, stable camera, natural light, no readable fake UI text.

Template 2:

A short social video opening where [user] discovers [benefit], realistic motion, concise visual hook, simple background, no exaggerated claims.

Template 3:

Image-to-video: animate this reference image with subtle camera movement, preserve product shape and colors, no new text, no distorted interface elements.

These templates are intentionally constrained. They do not ask for a whole campaign in one instruction. They ask for one scene that can be reviewed. Once a scene works, create variations by changing one variable at a time: camera movement, lighting, subject action, scene length, or reference image. If you change everything at once, you lose the ability to learn from the result.
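Changing one variable at a time is easy to enforce when the template is filled programmatically. This minimal sketch assumes Template 1 with the camera and lighting phrases turned into placeholders; the `one_variable_variations` helper and the example values are hypothetical, and the resulting strings would still be pasted into whichever generator you are testing.

```python
from string import Template

# Template 1 from this guide, with $-placeholders in place of [brackets].
DEMO_TEMPLATE = Template(
    "A clean product demo clip of $product solving $problem for $user, "
    "one subject, one action, $camera, $lighting, no readable fake UI text."
)

baseline = {
    "product": "a budgeting app",
    "problem": "late bill payments",
    "user": "a freelancer",
    "camera": "stable camera",
    "lighting": "natural light",
}


def one_variable_variations(base: dict[str, str], variable: str,
                            options: list[str]) -> list[tuple[str, str]]:
    """Build prompts that differ from the baseline in exactly one variable."""
    prompts = []
    for option in options:
        if option == base[variable]:
            continue  # the baseline value is not a new variation
        settings = {**base, variable: option}
        prompts.append((f"{variable}={option}", DEMO_TEMPLATE.substitute(settings)))
    return prompts


for label, prompt in one_variable_variations(
        baseline, "camera", ["stable camera", "slow push-in", "overhead workspace shot"]):
    print(label, "->", prompt)
```

Because every variation keeps the other placeholders fixed, any difference in the generated clip can be traced back to the single changed value.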

Step 4: build a comparison or checklist scorecard

A scorecard turns a free-tier test from a subjective experiment into a production decision. Rate each generation on a simple one-to-five scale for prompt match, visual clarity, continuity, editability, product accuracy, brand safety, and channel fit. Do not score beauty alone. The most beautiful clip can still fail if it creates editing problems or misrepresents the product.

Here is a practical checklist you can copy into a spreadsheet:

- Does the clip match the one-sentence job?

- Is the main subject clear in the first two seconds?

- Are product screens, logos, and objects stable enough for the use case?

- Does the clip avoid fake data, fake claims, or unsupported outcomes?

- Can the editor trim the beginning and end without losing meaning?

- Is there room for captions, UI callouts, or subtitles?

- Does the scene connect naturally to the previous and next shot?

- Would a first-time viewer understand what action to take next?
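The scorecard can live in a spreadsheet, but the same logic is easy to express in code. The sketch below uses the seven criteria from this step on the one-to-five scale; the hard floor on product accuracy and brand safety and the 3.5 average cut-off are assumptions chosen for illustration, reflecting the point that a beautiful clip can still fail.

```python
from statistics import mean

CRITERIA = (
    "prompt_match", "visual_clarity", "continuity", "editability",
    "product_accuracy", "brand_safety", "channel_fit",
)
# Assumed rule: these criteria veto a clip no matter how high the average is.
HARD_FLOOR = {"product_accuracy": 4, "brand_safety": 4}
MIN_AVERAGE = 3.5  # assumed cut-off; tune it per project


def score_clip(scores: dict[str, int]) -> tuple[float, str]:
    """Return the average score and a keep/regenerate/reject label."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be on the 1-5 scale, got {value}")
    avg = round(mean(scores[c] for c in CRITERIA), 2)
    if any(scores[c] < floor for c, floor in HARD_FLOOR.items()):
        return avg, "reject"  # inaccurate or unsafe: a high average does not rescue it
    return avg, "keep" if avg >= MIN_AVERAGE else "regenerate"


beautiful_but_wrong = {**dict.fromkeys(CRITERIA, 5), "product_accuracy": 2}
print(score_clip(beautiful_but_wrong))  # (4.57, 'reject')
```

A high average with a broken product screen still comes back as reject, which is the behavior the checklist above is meant to protect.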

Step 5: review the first generation like an editor

The first generation is not the final asset. Review it like an editor assembling a timeline. Look for the first usable frame, the last usable frame, the strongest motion moment, and any part that would confuse a viewer. Record every note with the same four labels: keep, trim, regenerate, or reject. Consistent labels make batch work faster.

When a clip is close but not usable, avoid rewriting the entire prompt. Identify the exact failure. If the camera moved too much, reduce the camera instruction. If the interface changed, emphasize stable layout and no invented elements. If the clip feels generic, add a stronger source reference or user context. If the scene is too busy, remove secondary actions.

For multi-shot projects, check the edit sequence after every two or three clips. Do not wait until twenty clips are generated to discover that nothing cuts together. AI video continuity is easier to protect when each new shot is judged against the timeline, not against a standalone preview window.

Prompt variations for production testing

Create controlled variations around the same idea. Below are practical variation types that work across comparison, storyboard, and app preview projects:

- Camera variation: static tripod, slow push-in, handheld documentary feel, screen-level tracking, or overhead workspace shot.

- Pacing variation: immediate action, one-second setup, before-and-after reveal, or result-first opening.

- Reference variation: screenshot-led, product photo-led, character reference-led, or moodboard-led.

- Channel variation: vertical social crop, landscape blog embed, square ad preview, or app store-safe device framing.

- Risk variation: strict product accuracy, conceptual mood only, no readable text, or caption-ready blank space.

The best version is often the most controlled version, not the most dramatic version. If the goal is education, onboarding, or product trust, viewers need clarity before spectacle. Use cinematic motion only when it supports the message.

Internal linking and next steps

If your next bottleneck is camera movement, read Veo 3 camera control prompts at /blog/veo-3-camera-control-prompts-2026. If your next bottleneck is reference consistency, read Veo 3 image reference workflow at /blog/veo-3-image-reference-workflow-2026. If your next bottleneck is audio, dialogue, or sound design, read Veo 3 native audio prompt guide at /blog/veo-3-native-audio-prompt-guide-2026.

The practical next step is to create one small test pack: three prompts, three generated clips, one scorecard, and one edited draft. That is enough to decide whether the workflow is worth scaling. If the test pack fails, fix the workflow before increasing volume.

Common mistakes

Mistake 1: treating free or test access as a production guarantee

Access, credits, queue speed, export rules, and commercial terms can change. Always check the current product page and account state before promising a deadline to a client or team.

Mistake 2: asking for too many scenes in one prompt

A large prompt may produce something impressive, but it is harder to repair. Short scene prompts are easier to compare, regenerate, and edit into a coherent sequence.

Mistake 3: relying on generated readable text

Important captions, prices, product names, disclaimers, and calls to action should be added in editing. Generated text is often unreliable and can create compliance problems.

Mistake 4: skipping the review checklist

Without a checklist, teams approve the clip that looks newest rather than the clip that solves the job. Keep the scorecard close to the prompt and update it after every generation.

FAQ

Is Seedance 2.0 Free better than Veo 3 Free?

It depends on the job. Seedance 2.0 Free can be better for quick exploratory AI video tests, while Veo 3 Free is useful when you want to practice a Veo-oriented prompt workflow. Always check current access and limits before planning production.

Can I use free AI video credits for client work?

Use caution. Free credits are best for testing, briefs, and drafts. For client delivery, verify current commercial terms, watermark behavior, export quality, and account limits for the specific tool you use.

What should I compare first: access, quality, or limits?

Start with access because a tool you cannot reliably access cannot support a workflow. Then compare quality, editability, generation speed, and practical credit limits.

Does Veo 3 Free mean unlimited Veo 3 video generation?

No. Free access usually has limits, and those limits can change. Treat any free plan as a testing path unless the current product page explicitly confirms otherwise.

What is the best fair test prompt?

Use a short prompt with one subject, one action, one environment, and one camera instruction. Avoid asking for a complex multi-scene ad on the first comparison.

Should I choose the tool with the highest single best clip?

Not necessarily. Choose the tool that produces repeatable, editable results across several prompts, because production reliability matters more than one lucky generation.
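One way to make "repeatable" concrete is to rank tools by their weakest result across the same prompts rather than by their single best clip. The sketch below assumes you already have one scorecard average per prompt for each tool; the tool names and scores are hypothetical placeholders, not measurements of Seedance 2.0 Free or Veo 3 Free.

```python
from statistics import mean


def summarize(scores: list[float]) -> dict[str, float]:
    """Summarize one tool's clip scores across several test prompts."""
    return {"best": max(scores), "mean": round(mean(scores), 2), "worst": min(scores)}


def pick_tool(results: dict[str, list[float]]) -> str:
    """Prefer the highest worst-case score, then the highest mean.

    The single best clip is deliberately ignored: one lucky generation
    does not show that a workflow is repeatable.
    """
    return max(results, key=lambda tool: (min(results[tool]), mean(results[tool])))


# Hypothetical scorecard averages (1-5 scale) for the same three prompts.
results = {
    "tool_a": [4.9, 2.1, 2.6],  # one standout clip, two weak ones
    "tool_b": [3.9, 3.7, 4.0],  # no standout, but consistent
}
for tool, scores in results.items():
    print(tool, summarize(scores))
print("choose:", pick_tool(results))
```

Here tool_b wins even though tool_a produced the highest single clip. Ranking by worst case first is one reasonable choice; a team with budget for extra regenerations might weight the mean more heavily.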

Final recommendation

Whether you start with Seedance 2.0 Free or Veo 3 Free, build a small, controlled workflow before scaling. Define the job, prepare references, use constrained prompt templates, score results, and keep the final edit honest. The creators who win with AI video in 2026 will not be the people who generate the most clips. They will be the people who turn generation into a repeatable production system.

Production notes for teams

Create a shared folder for every project. Put prompts, source images, generated clips, rejected clips, scorecards, final exports, and notes in separate subfolders. This prevents accidental reuse of weak generations and makes later optimization possible.

Name every generated clip with the date, scene number, prompt version, and review status. A simple name such as 2026-05-02-scene-03-v02-keep is more useful than a random download title. When a stakeholder asks why a clip was chosen, the naming system gives you an audit trail.
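A naming convention only produces an audit trail if everyone applies it the same way, so it can help to generate and parse names in code. This sketch assumes an ISO date prefix and two-digit scene and version numbers (formatting choices this guide does not fix) and reuses the keep, trim, regenerate, and reject labels from Step 5; the function names are hypothetical.

```python
import re
from datetime import date

REVIEW_LABELS = ("keep", "trim", "regenerate", "reject")  # labels from Step 5
NAME_PATTERN = re.compile(
    r"^(\d{4}-\d{2}-\d{2})-scene-(\d{2})-v(\d{2})-(keep|trim|regenerate|reject)$"
)


def clip_name(day: date, scene: int, version: int, status: str) -> str:
    """Build a clip name such as 2026-05-02-scene-03-v02-keep (no extension)."""
    if status not in REVIEW_LABELS:
        raise ValueError(f"unknown review status: {status}")
    return f"{day.isoformat()}-scene-{scene:02d}-v{version:02d}-{status}"


def parse_clip_name(name: str) -> dict[str, object]:
    """Recover the audit-trail fields from a managed clip name."""
    match = NAME_PATTERN.match(name)
    if match is None:
        raise ValueError(f"not a managed clip name: {name}")
    day, scene, version, status = match.groups()
    return {"date": date.fromisoformat(day), "scene": int(scene),
            "version": int(version), "status": status}


print(clip_name(date(2026, 5, 2), 3, 2, "keep"))  # 2026-05-02-scene-03-v02-keep
```

Parsing names back into fields makes it easy to list, for example, every clip still marked regenerate before a review meeting.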

Keep a prompt changelog. Write one line after each variation explaining what changed and what improved or broke. Over time, this becomes a private prompt library that is more valuable than generic prompt lists because it reflects your exact audience, product, and channel constraints.
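A changelog stays honest when the "what changed" part is computed rather than remembered. The sketch below is a hypothetical helper, not a feature of any video tool: it diffs two prompt-setting dictionaries, refuses a variation that changes more than one variable, and writes one line per version. The setting names and outcome text are illustrative.

```python
from dataclasses import dataclass


@dataclass
class ChangelogEntry:
    version: int
    changed: dict[str, tuple[str, str]]  # variable -> (old value, new value)
    outcome: str  # what improved or broke, in one short phrase

    def line(self) -> str:
        changes = "; ".join(f"{name}: {old} -> {new}"
                            for name, (old, new) in self.changed.items())
        return f"v{self.version:02d} | {changes} | {self.outcome}"


def diff_settings(old: dict[str, str], new: dict[str, str]) -> dict[str, tuple[str, str]]:
    """Return every prompt variable whose value differs between two versions."""
    names = sorted(old.keys() | new.keys())
    return {n: (old.get(n, "-"), new.get(n, "-"))
            for n in names if old.get(n) != new.get(n)}


def log_variation(log: list[ChangelogEntry], version: int, old: dict[str, str],
                  new: dict[str, str], outcome: str) -> ChangelogEntry:
    """Append one changelog line, refusing variations that change several variables."""
    changed = diff_settings(old, new)
    if len(changed) != 1:
        # Changing several variables at once hides which one caused the result.
        raise ValueError(f"expected one changed variable, got {sorted(changed)}")
    entry = ChangelogEntry(version, changed, outcome)
    log.append(entry)
    return entry


log: list[ChangelogEntry] = []
v01 = {"camera": "stable camera", "lighting": "natural light"}
v02 = {**v01, "camera": "slow push-in"}
print(log_variation(log, 2, v01, v02, "smoother motion, UI layout still stable").line())
```

The printed line reads `v02 | camera: stable camera -> slow push-in | smoother motion, UI layout still stable`, which is the one-line record this habit calls for.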

Separate creative review from factual review. A designer can review mood, motion, and composition. A product owner should review interface truth. A marketer should review the claim and CTA. A legal or policy reviewer may be needed for regulated industries. Do not ask one person to catch every risk.

Export a low-resolution draft before spending time on polish. The draft reveals whether the story actually works. If the sequence is confusing at draft quality, better color, sharper images, or more dramatic motion will not fix the strategic problem.

After publishing, keep measuring. For a blog article, monitor impressions, clicks, and query match. For a product video, monitor watch rate, click-through, activation, or support tickets. For a launch clip, compare variants by channel. AI video is not finished when it looks good; it is finished when it performs the job it was made for.

When the first draft is close to usable, improve it with a narrow second pass instead of starting over. Keep the approved scene order, preserve the best frame, and change only the weak variable. This habit protects continuity, reduces review fatigue, and gives the team a cleaner record of what actually improved performance.

Related Articles


What is Google Veo 4?

Complete overview of Google Veo 4 AI video generator features, capabilities, and improvements over Veo 3.


How to Use Google Veo 4

Step-by-step guide to using Google Veo 4 AI video generator. Learn prompts, settings, and best practices for creating stunning AI videos.


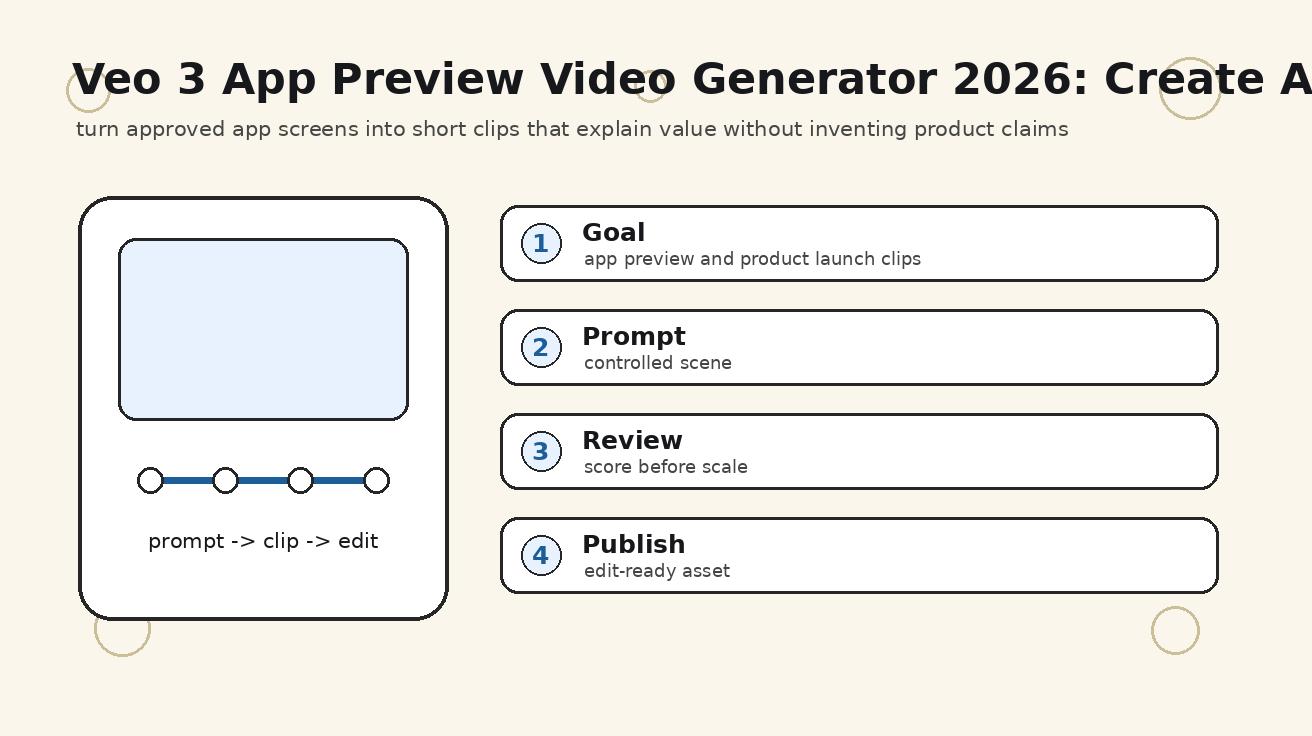

Veo 3 App Preview Video Generator 2026: Create App Store and Product Clips

A practical Veo 3 app preview video generator workflow for app store clips, product launch videos, mobile app promos, screenshots, prompts, and QA checks.
